I’ve signed up for Upcloud, a new cloud service provider, and thought I’d put together my initial thoughts into a blog post.

First, I tried to launch a trial server with 2GB of RAM, but found I could only use 512MB as I was on a free account. That’s fair enough, but still a shame.

On the server creation page, I was presented with a few Linux images I could choose from. I selected Ubuntu 12.04, launched the server and it was available almost instantly.

I logged in as root using the supplied password, ran apt-get update, and saw that it was using Finnish servers and that 140 packages were out of date. I then ran apt-get upgrade, but the downloads were slowish (<1MB/sec), so it took a few minutes to download all the updates, longer than the server took to deploy!

Apt-get upgrade output for download section:

Fetched 134 MB in 2min 47s (800 kB/s)

It then took another few minutes to apply all the updates, as you’d expect, but it does mean an Upcloud server will take a bit longer than you’d think it would to get up and running. There really should be an option to deploy an already updated image.

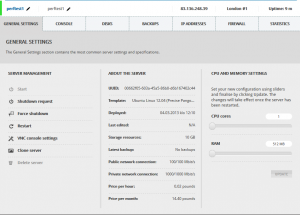

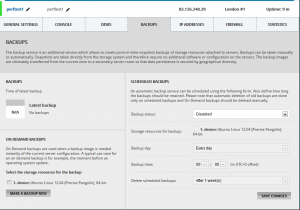

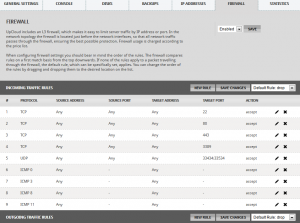

While that was running, I took a look at the various settings screens (see the screenshots), and noticed the network connection speed details:

Public network connection: 100/100 Mbit/s

Private network connection: 1000/1000 Mbit/s

About 10 minutes after I’d created my Upcloud account, the server was running, with everything complete and up to date.

I started testing the server by building WordPress following my own “10 million hits a day with WordPress” guide, though these days I prefer using MariaDB instead of the default MySQL.

When installing MariaDB, I noticed an error saying “Unable to resolve perftest1”, so I checked /etc/hosts and found that the file does not get populated with the node’s custom hostname. I added it there manually.
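A quick way to confirm this kind of problem is to check whether the hostname appears as a name in the hosts file at all. The sketch below is only an illustration: the helper name `hostname_in_hosts` is made up, and the `127.0.1.1` address in the sample is the usual Debian/Ubuntu convention rather than something taken from the server in this post.

```python
def hostname_in_hosts(hosts_text: str, hostname: str) -> bool:
    """Return True if `hostname` is listed as a name on any active hosts line."""
    for line in hosts_text.splitlines():
        # Ignore anything after a '#', then skip blank lines.
        entry = line.split("#", 1)[0].strip()
        if not entry:
            continue
        fields = entry.split()
        # fields[0] is the IP address; the rest are the canonical name and aliases.
        if hostname in fields[1:]:
            return True
    return False


# Hypothetical file contents before and after the manual fix.
before = "127.0.0.1 localhost\n"
after = before + "127.0.1.1 perftest1\n"  # assumed address, see note above

print(hostname_in_hosts(before, "perftest1"))
print(hostname_in_hosts(after, "perftest1"))
```

To run it against a real machine, pass in the contents of /etc/hosts and the output of `hostname`.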

I was unable to run the command to add a trusted key to APT; the command just hung. After some investigation, I found this is because Upcloud block all but a few destination ports on their trial accounts, and the keyserver port is one of the blocked ones. Because of this, I just ignored the security warning when installing MariaDB.
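If a command hangs like this, a short TCP connection test with a timeout can tell you whether an outbound port is being dropped. This is a minimal sketch, not what I ran at the time: `port_reachable` is a made-up helper, and the keyserver host in the comment is an assumption (the post does not record which keyserver was used), although 11371 is the standard HKP keyserver port.

```python
import socket


def port_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the name and attempts the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers timeouts, refused connections, and DNS failures alike.
        return False


# Example call (assumed host, not from the original test):
# port_reachable("keyserver.ubuntu.com", 11371)
```

A silently filtered port typically shows up as the call taking the full timeout before returning False, whereas a closed port usually fails straight away.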

After getting WordPress running without caching, I did a simple blitz.io test of 10 – 100 users over 60 seconds. The CPU maxed out immediately, and I ended up aborting the run at around 80 concurrent users. I don’t know if it’s a shared processor usage cap from Upcloud that crippled me, or something else (denial of service prevention?), but performance was worse than I’d expect from even an Amazon Micro instance.

Because of this, I moved on to installing the Varnish cache software, and I retried the same blitz of 10 – 100 users over 60 seconds. There were no problems at all, so I retried with 100 – 250 users. Again there were no problems: peak network output was 2312.9KB/sec with 250 concurrent users and 0 errors.

The blitz.io results were:

This rush generated 9,768 successful hits in 1.0 min and we transferred 97.97 MB of data in and out of your app. The average hit rate of 150/second translates to about 13,021,426 hits/day.

The average response time was 59 ms.

This suggests to me that the initial problems I had are some kind of CPU cap on shared processor machines. That’s fair enough, but something you need to be aware of if you’re building on them.
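As an aside, the hits/day figure in the blitz.io summary is just the per-second rate scaled up to 24 hours. The sketch below shows the arithmetic; the function names are mine, and the observation that blitz.io rounds the displayed rate is an inference from the numbers, not something blitz.io states.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def hits_per_day(hits_per_second: float) -> float:
    """Scale a sustained per-second hit rate up to a full day."""
    return hits_per_second * SECONDS_PER_DAY


def implied_rate(daily_hits: float) -> float:
    """Work backwards from a hits/day figure to the per-second rate behind it."""
    return daily_hits / SECONDS_PER_DAY


print(hits_per_day(150))                     # the rounded rate from the summary
print(round(implied_rate(13_021_426), 2))    # the rate implied by the hits/day figure
```

The rounded 150/second gives just under 13 million hits a day, while the reported 13,021,426 corresponds to a rate of roughly 150.7/second, so the two numbers agree once rounding is taken into account.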

I then moved on to doing some network checks. Upcloud don’t mention their location, but the IP address I was given routes into telecity-edge1-1.uk-lon1.ipv4.upcloud.com. Given that Telecity are one of the best data center operators out there, this is a much more positive sign than I expected. I was worried Upcloud were either running in their own very small building, or had outsourced it to the cheapest provider they could find.

SSD performance check

Testing the SSD performance, I ran the fairly standard simple commands:

hdparm -Tt /dev/vda1

/dev/vda1:

Timing cached reads: 4930 MB in 2.00 seconds = 2465.65 MB/sec

Timing buffered disk reads: 454 MB in 3.01 seconds = 150.75 MB/sec

dd if=/dev/zero of=tempfile bs=1M count=1024 conv=fdatasync,notrunc

1073741824 bytes (1.1 GB) copied, 4.32663 s, 248 MB/s

Next, I cleared the buffer-cache to accurately measure read speeds directly from the device:

echo 3 > /proc/sys/vm/drop_caches

dd if=tempfile of=/dev/null bs=1M count=1024

1073741824 bytes (1.1 GB) copied, 6.89949 s, 156 MB/s

Now that the last file is in the buffer, repeat the command to see the speed of the buffer-cache:

dd if=tempfile of=/dev/null bs=1M count=1024

1073741824 bytes (1.1 GB) copied, 6.56313 s, 164 MB/s

I’m pretty happy with 150MB/sec; a comparable run on an SSD-based server I use with Hetzner gets a very similar result of 170MB/sec.
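If you want to compare dd results across machines, it helps to know that dd reports decimal megabytes (1 MB = 1,000,000 bytes), which is why 1073741824 bytes shows up as 1.1 GB. Here is a small sketch that recomputes the three figures above from the byte count and elapsed times; the function name and the labels are just for illustration.

```python
def throughput_mb_s(num_bytes: int, seconds: float) -> float:
    """Throughput in decimal megabytes per second, the unit dd prints."""
    return num_bytes / seconds / 1_000_000


FILE_BYTES = 1073741824  # 1024 blocks of 1 MiB, as written by the dd command

# Elapsed times copied from the dd output above.
runs = {
    "write": 4.32663,
    "first read": 6.89949,
    "repeat read": 6.56313,
}

for label, seconds in runs.items():
    print(f"{label}: {throughput_mb_s(FILE_BYTES, seconds):.0f} MB/s")
```

Rounded to whole numbers, these come out at the same 248, 156 and 164 MB/s that dd printed.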

Payment

I manually made a payment of £10. The process was as simple as it gets, and the payment was immediately credited to my account: no hanging around, no telephone authorisation steps, etc.

Resizing the server

I decided to upgrade the server to 2GB of RAM, so I could test a dual core machine with 4GB of RAM. I had to shut down the server before resizing it, but the process itself was immediate. In the future it would be good if you could specify the new size, then trigger an automatic reboot to apply the resize.

On reboot, I realised I had lost the root password (silly mistake by me!), and there’s no “System Recovery” option, just a VNC console. So I opened the console, rebooted the server, and used grub to enter single user mode, which asked for a root password… I’m sure there’s a way around this, but it escapes my memory for now, so I simply deleted that server and built a second one.

The new larger server came up straight away. I re-installed MariaDB, Nginx, and WordPress as before, and re-ran the blitz test. CPU usage was still 100% after about 50 users, but the response time remained under my defined limit of 5 seconds throughout the test, and no errors were generated. That’s a significant improvement over the shared processor server, and a very good result for uncached and untuned WordPress in general!

I then re-installed Varnish, and tested 100 – 500 users over 60 seconds, and was impressed with the network throughput, with no errors and an average response time of 97ms. While that server would be relatively expensive at £53 a month plus bandwidth charges, the performance was very good when compared to a lot of the other cloud providers I’ve tried out.

Conclusion

There’s lots more to picking an IaaS provider than a few quick benchmarks (resilience, ongoing support, distributed data centers, price, and so on, all come to mind), but I like the fact that Upcloud are taking a different approach of mixing the open-source KVM hypervisor with high-end equipment (the InfiniBand interconnects are not going to be cheap).

It may not be the approach for everyone, and it’s not what Amazon are doing, but there’s nothing wrong with looking at things differently, especially if you’re much smaller than your main competition.